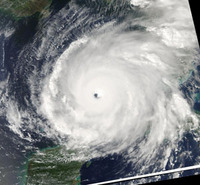

Hurricane Rita, on its way across the Gulf of Mexico in September 2005. Image courtesy: Liam Gumley/UW-CIMSS

Hurricane models are continuously upgraded and refined by mathematicians like Dr. Michael Navon at SCS. He and his FSU group, and collaborators across the US, are working on improving the mathematical foundation of the models. One of the questions Dr. Navon is currently working on is data assimilation. Simply speaking, this is about how to update your model with new data from observations, and about how much you should trust new measurements compared to the well-founded data from the model, which is based on thousands of earlier measurements.

DOES REALITY COUNT? We all know that if the map differs from reality, reality counts. But if a measurement of, say, wind, humidity, or temperature (all of which are important factors in hurricane prediction) differs from what your model predicted, what do you do? The new data could very well contain important information about an unexpected turn in the hurricane's trajectory or an increase in its strength. However, it could also be the result of an inaccurate measurement or a broken buoy, or simply lie within the range of normal variation at that spot in the atmosphere. Scientists thus need to balance the predicted value, which is based on thorough research, against the actual observations at each moment.
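One common way to strike that balance is to weight the forecast and the observation by how uncertain each one is. The short Python sketch below illustrates the idea for a single quantity. The function name `blend`, the wind speeds, and the variances are all made up for illustration and do not come from any operational model.

```python
def blend(forecast, forecast_var, observation, observation_var):
    """Combine a forecast and an observation, weighting each by its certainty."""
    # Weight given to the observation: close to 1 when the forecast is
    # uncertain, close to 0 when the instrument is noisy.
    gain = forecast_var / (forecast_var + observation_var)
    estimate = forecast + gain * (observation - forecast)
    # The combined estimate is never less certain than the forecast alone.
    estimate_var = (1.0 - gain) * forecast_var
    return estimate, estimate_var


# Hypothetical case: the model predicts a 40 m/s wind, a buoy reports 50 m/s.
trusted_buoy = blend(40.0, 4.0, 50.0, 1.0)   # accurate instrument
noisy_buoy = blend(40.0, 4.0, 50.0, 36.0)    # unreliable instrument
```

With an accurate buoy the estimate moves most of the way toward the measurement. With an unreliable one it stays close to the model's prediction.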

Dr. Navon is working with a mathematical technique called the Kalman filter, a set of equations that provides an efficient, recursive way to estimate the state of a process while minimizing the mean of the squared error. The filter is powerful in several respects: it supports estimates of past, present, and even future states, and it can do so even when the precise nature of the modeled system is unknown. Because it minimizes the variance of the estimate, it gives the computer model the best available starting state for its next forecast.
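To make the recursion concrete, here is a minimal sketch of a Kalman filter for one scalar quantity. It assumes the true value drifts as a random walk, which is an illustrative simplification and not a hurricane model. The function name `kalman_1d`, the pressure readings, and the noise variances are hypothetical.

```python
def kalman_1d(observations, x0, p0, process_var, obs_var):
    """Run a scalar Kalman filter and return (estimate, variance) per step.

    Assumes a random-walk state model: next state = current state + noise.
    """
    x, p = x0, p0
    history = []
    for z in observations:
        # Predict: the estimate carries over, but its uncertainty grows.
        p = p + process_var
        # Update: move toward the observation by a variance-based gain.
        k = p / (p + obs_var)
        x = x + k * (z - x)
        p = (1.0 - k) * p
        history.append((x, p))
    return history


# Hypothetical pressure readings in hPa, starting from a poor first guess.
readings = [1002.0, 998.5, 1001.0, 999.5, 1000.5]
estimates = kalman_1d(readings, x0=990.0, p0=100.0, process_var=0.01, obs_var=4.0)
```

Each pass through the loop needs only the previous estimate and its variance, which is what makes the method recursive. In this run the variance shrinks with every new reading as the filter gains confidence.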

PROFESSOR KALMAN Rudolf E. Kalman, who is Hungarian by birth, is a professor emeritus at the University of Florida. He started to develop the Kalman filter in 1959. Since then, due in large part to advances in digital computing, the filter has been the subject of extensive research and application, particularly in navigation. It has been used on spacecraft such as Apollo, but it has been difficult to use in weather models because it demands an unreasonable amount of computer resources.

Dr. Navon and his colleagues at CIRA, Colorado State, are working on a modification called the Ensemble Kalman Filter (EnKF). EnKF is a sophisticated sequential data assimilation method. It uses an ensemble of model states to represent the error statistics of the model estimate, and it uses ensemble integrations to predict those error statistics forward in time. The method was first proposed in 1994 by Dr. Geir Evensen of the Nansen Environmental and Remote Sensing Center in Bergen, Norway. The modification will bring down the computational complexity and eventually enable Kalman filters to find wider use in numerical weather prediction.
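The ensemble idea can be sketched in a few lines of Python for a single scalar state. This is a toy version of the perturbed-observation variant of EnKF under stated assumptions, not the implementation used by any of the groups mentioned here. The function names, the toy model, the ensemble size, and the observation values are all hypothetical.

```python
import random
import statistics


def enkf_step(ensemble, model, observation, obs_var, rng):
    """One forecast/analysis cycle of a scalar perturbed-observation EnKF."""
    # Forecast: advance every ensemble member with the model.
    forecast = [model(x) for x in ensemble]
    # The spread of the ensemble stands in for the forecast error variance.
    forecast_var = statistics.variance(forecast)
    gain = forecast_var / (forecast_var + obs_var)
    # Analysis: nudge each member toward its own noisy copy of the observation.
    obs_std = obs_var ** 0.5
    return [x + gain * (observation + rng.gauss(0.0, obs_std) - x)
            for x in forecast]


def toy_model(x):
    """Hypothetical dynamics that relax the state toward 30."""
    return 0.95 * x + 1.5


rng = random.Random(0)  # fixed seed so the run is repeatable
ensemble = [rng.gauss(20.0, 3.0) for _ in range(200)]  # poor initial guess
for obs in [29.0, 30.5, 30.0, 29.5]:
    ensemble = enkf_step(ensemble, toy_model, obs, obs_var=1.0, rng=rng)

analysis_mean = statistics.mean(ensemble)
analysis_spread = statistics.stdev(ensemble)
```

No error variance is carried along explicitly here. It is re-estimated from the ensemble members at every step, which is the part of the method that avoids propagating a full error covariance through a large weather model.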

An EnKF has already been implemented operationally for atmospheric data assimilation in Canada. It assimilates observations from a fairly complete observational network, using a forecast model that includes a standard operational set of physical parameterizations, and it obtains reasonable results.